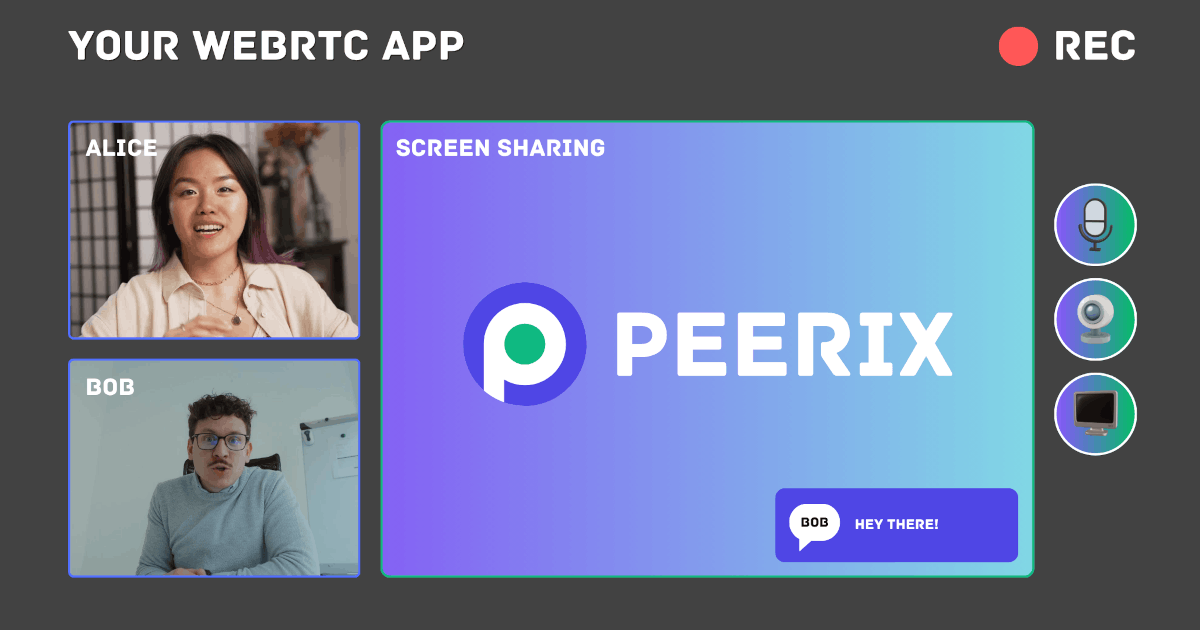

Today, I’m excited to introduce Peerix, a JavaScript/TypeScript library designed to simplify WebRTC development. Peerix abstracts away the complexities of WebRTC, allowing developers to focus on building their applications without worrying about the underlying signaling and peer connection management. With Peerix, you can easily create peer-to-peer applications for video conferencing, file sharing, gaming, and more. Whether you’re a seasoned WebRTC developer or just getting started, Peerix provides a straightforward API to help you get up and running quickly. Check out the comprehensive documentation and start building your next WebRTC application with Peerix today!

Lately, I have been using LLMs frequently in both my work and personal life. They are useful tools that can increase productivity and awareness. However, I started noticing that, from time to time, the chats hallucinate in an interesting way that affects me as well. When an LLM doesn’t have enough knowledge about something, it fills the gaps with incorrect information that fits your context or request. Even if you ask about the same thing at different times, using different words and without providing previous context, the model may respond with similar hallucinations, convincingly and in great detail. This behavior can be dangerous: if you trust its answers and see the same information repeatedly, you may start to believe it’s true. If you do notice a mistake, you can point it out, and the LLM will probably admit it. But here is the most interesting part: if you take a correct answer from the LLM and claim it is a mistake, the LLM will sometimes agree with you. This can spread misinformation and reinforce false information in your memory.

The New Year 🎄 is almost here, and what better way to celebrate than with a fun coding project? Today, I’m excited to share a simple Time Warp Scan implementation using pure JavaScript and HTML5 Canvas. Have fun, and happy New Year! May your coding be productive.

The pace of AI development is exhilarating, with new models and capabilities emerging constantly. Recently, I upgraded my PC with a new AMD GPU and have been exploring its power with local Large Language Model (LLM) tasks. Today, I’m taking on a far more complex challenge.

I set out to create an entire five-minute AI-generated podcast using only open-source models and tools. The entire process ran on my computer, completely bypassing expensive, privacy-compromising cloud services. The goal was to test the absolute limits of quality and feasibility for a fully self-hosted media production.

The result? The “Humanless Podcast”. Take a look at what came out of this experiment (click to play).

I will now walk you through the entire eight-step process of creating a podcast like this, from the initial script to the final video.

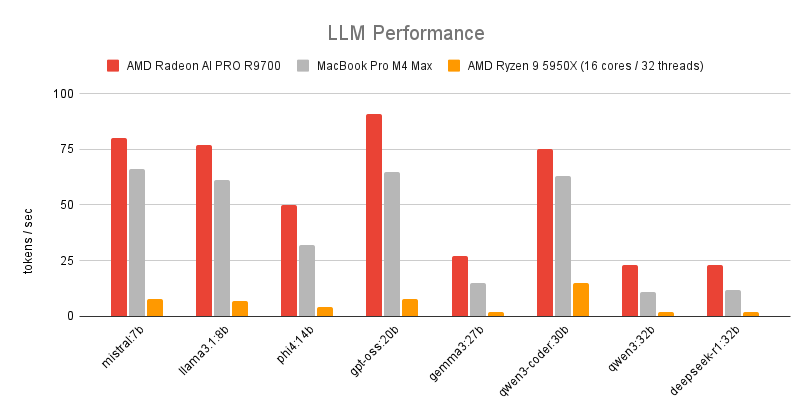

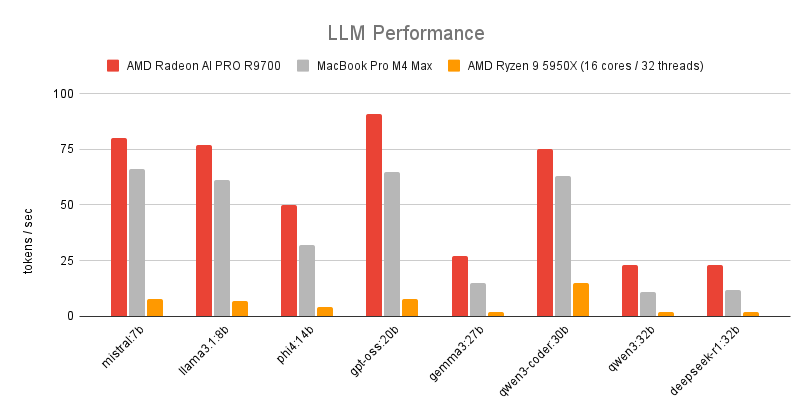

Recently, I acquired an AMD Radeon AI PRO R9700 to enhance my machine learning and development setup. It is a powerful GPU designed for professional workloads, including machine learning and AI applications. In this post, we explore the performance of large language models (LLMs) on the R9700, highlighting its capabilities and benchmarks.

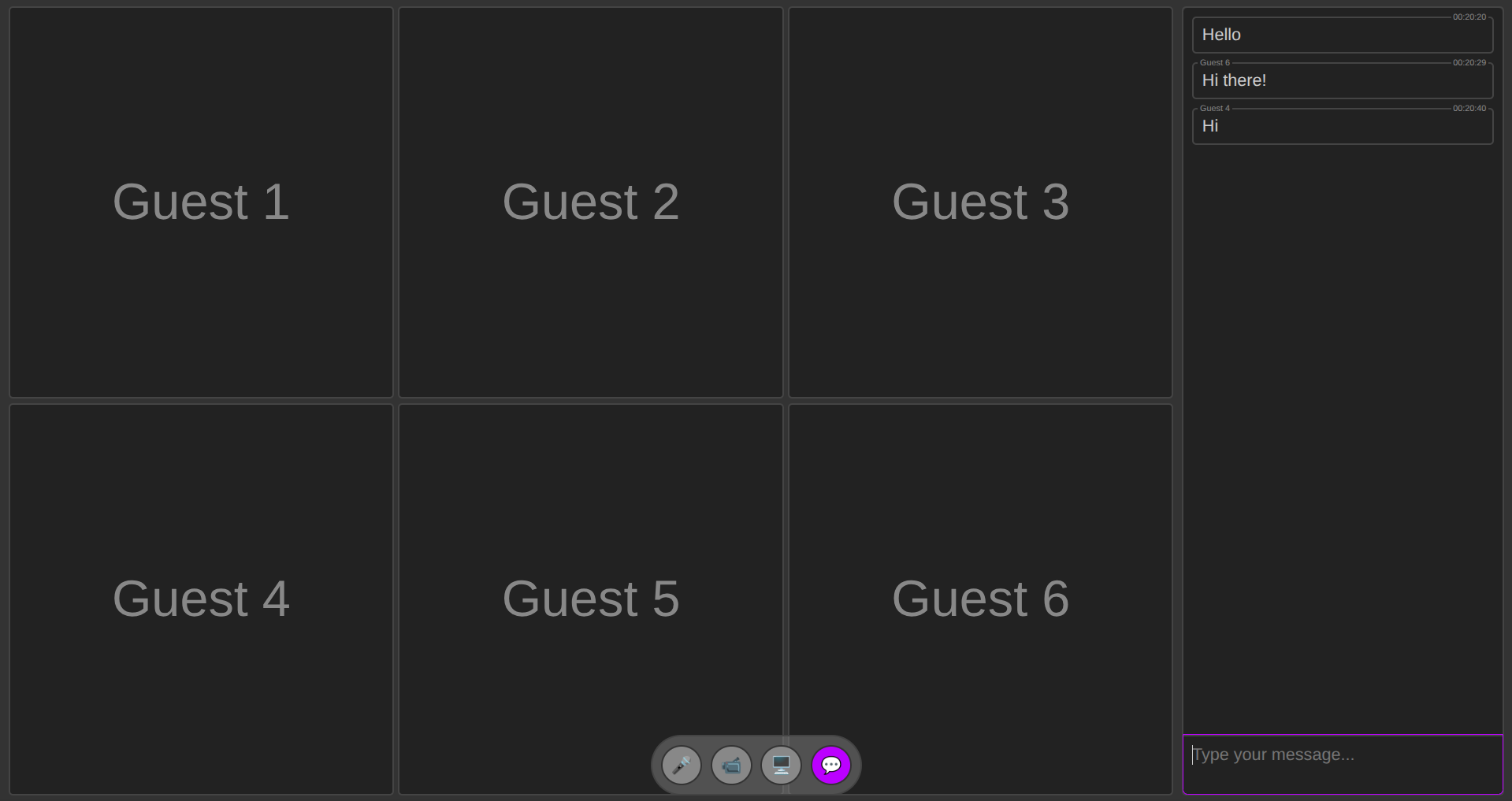

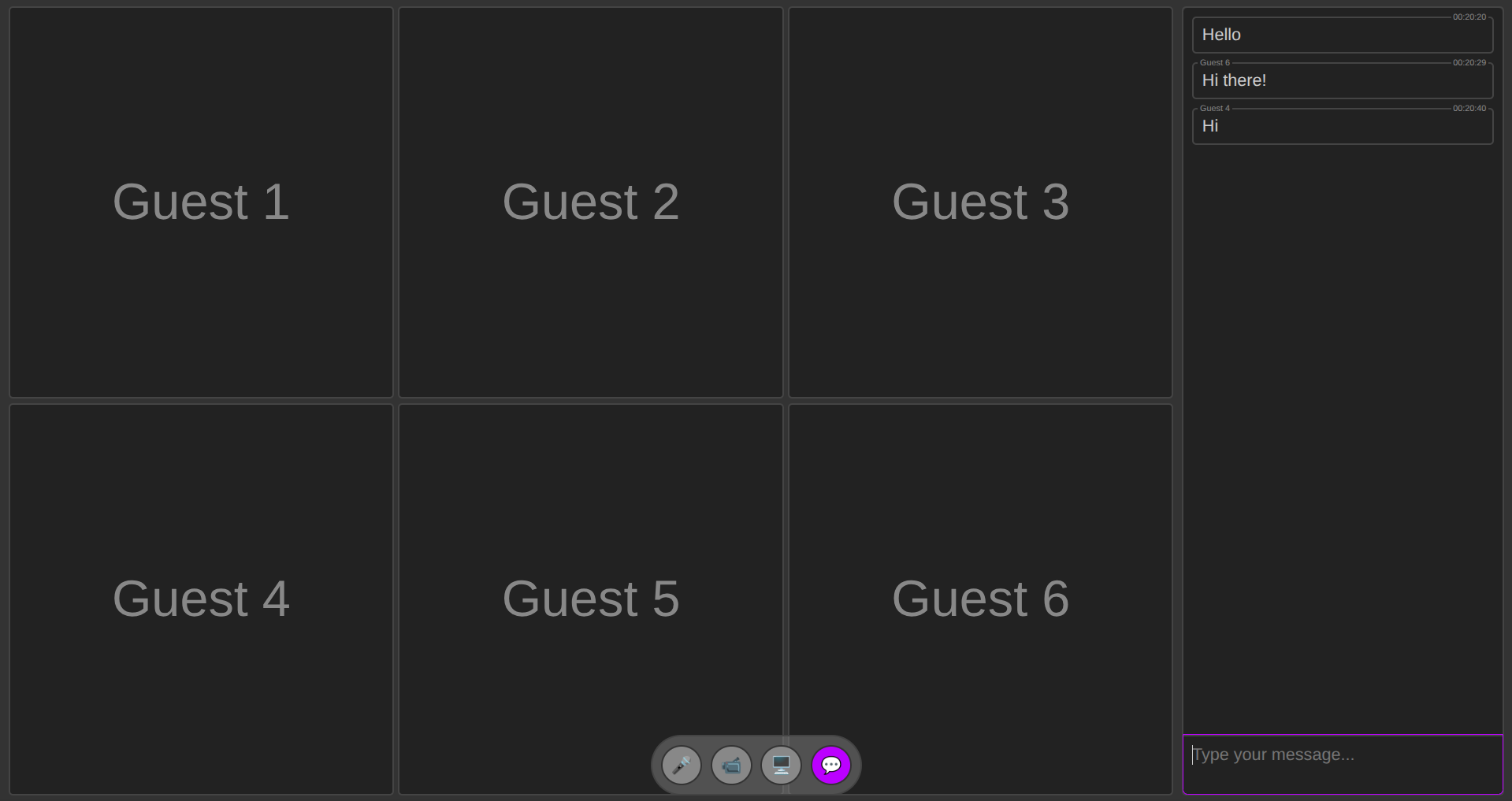

Building reliable, privacy-respecting peer-to-peer conferencing can be surprisingly simple when you split responsibilities cleanly: media transport (WebRTC) and signaling (a tiny transport for exchanging SDP and ICE). I built a minimal library to demonstrate that split and to enable serverless workflows using whatever signaling channel you prefer — from in-memory drivers for demos to NATS-based pub/sub for distributed apps.

This post describes the library’s purpose, core design, how to use it, and a practical example of a NATS signaling driver with end-to-end encryption using the browser Web Crypto API.
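To make the split between media transport and signaling concrete, here is a minimal sketch of what an in-memory signaling driver could look like. The class name `InMemorySignaling` and its `join`/`send` methods are hypothetical and chosen for illustration; they are not the library's actual API. The driver only relays opaque messages (SDP offers and answers, ICE candidates) and knows nothing about WebRTC itself.

```javascript
// Hypothetical in-memory signaling driver (illustrative, not the library's API).
// It relays plain objects between peers that joined the same room.
class InMemorySignaling {
  constructor() {
    // room name -> Set of { peerId, handler }
    this.rooms = new Map();
  }

  // Register a peer in a room. Returns a function that removes the peer again.
  join(room, peerId, handler) {
    if (!this.rooms.has(room)) this.rooms.set(room, new Set());
    const entry = { peerId, handler };
    this.rooms.get(room).add(entry);
    return () => this.rooms.get(room)?.delete(entry);
  }

  // Deliver a message to every other peer in the room,
  // or only to the peer named by `to` when it is given.
  send(room, from, message, to = null) {
    for (const { peerId, handler } of this.rooms.get(room) ?? []) {
      if (peerId === from) continue; // never echo back to the sender
      if (to !== null && peerId !== to) continue;
      handler({ from, ...message });
    }
  }
}

// Usage: two peers exchange an offer through the driver.
const signaling = new InMemorySignaling();
signaling.join("demo-room", "bob", (msg) => {
  // In a browser, bob would pass msg.sdp to RTCPeerConnection.setRemoteDescription here.
  console.log(`bob received ${msg.type} from ${msg.from}`);
});
signaling.send("demo-room", "alice", { type: "offer", sdp: "v=0 ..." });
```

Because the driver treats messages as opaque, swapping it for a pub/sub transport, or encrypting the payload before `send`, should not require any change to the WebRTC side.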

Just try it out: live demo | source code

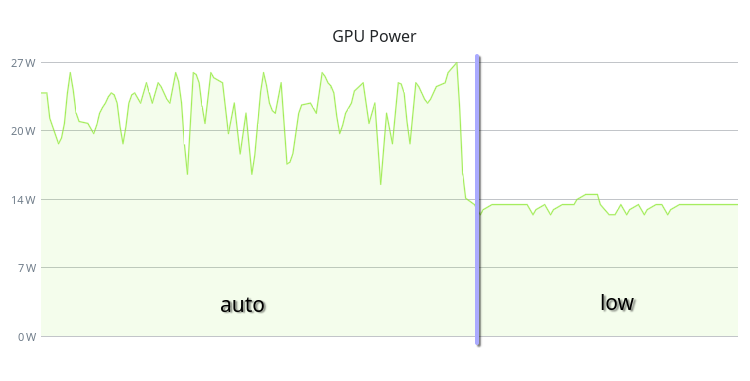

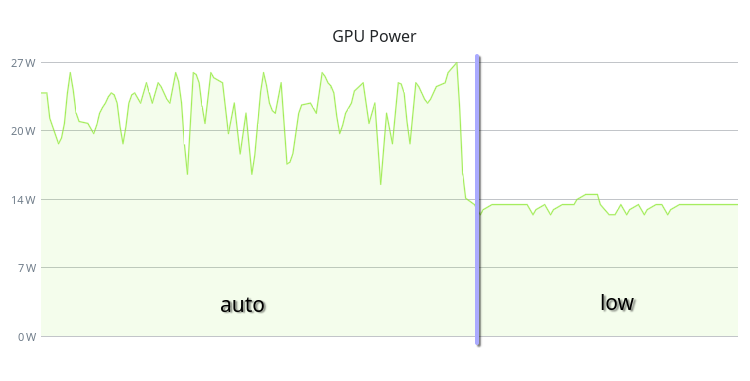

If you have a PC running Linux with an AMD GPU, you can change your GPU performance level. By default, the AMDGPU driver uses the “auto” performance level. But if you don’t need high performance, you can set it to “low” to reduce power consumption, heat generation, and fan noise.

On my system this change reduced the GPU power consumption from 30W to 15W in idle state and completely eliminated fan spinning.
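The AMDGPU driver exposes the performance level through the sysfs file `power_dpm_force_performance_level`. As a rough sketch, a small Node.js helper could switch it. The helper name `setPerformanceLevel` is hypothetical, and the `card0` path is an assumption: on systems with several GPUs the card index may differ. Writing to the real sysfs file requires root privileges.

```javascript
const fs = require("fs");

// Assumption: the AMD GPU is card0; check /sys/class/drm/ on your system.
const DEFAULT_PATH =
  "/sys/class/drm/card0/device/power_dpm_force_performance_level";

// Only the basic levels are handled in this sketch.
const LEVELS = ["auto", "low", "high"];

// Hypothetical helper: writes the level and returns the value read back.
function setPerformanceLevel(level, sysfsPath = DEFAULT_PATH) {
  if (!LEVELS.includes(level)) {
    throw new Error(`Unsupported level "${level}", expected one of: ${LEVELS.join(", ")}`);
  }
  fs.writeFileSync(sysfsPath, level); // needs root for the real sysfs file
  return fs.readFileSync(sysfsPath, "utf8").trim();
}

// Example (run as root): setPerformanceLevel("low");
```

The setting does not survive a reboot, so it has to be reapplied at startup if you want it to stick.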

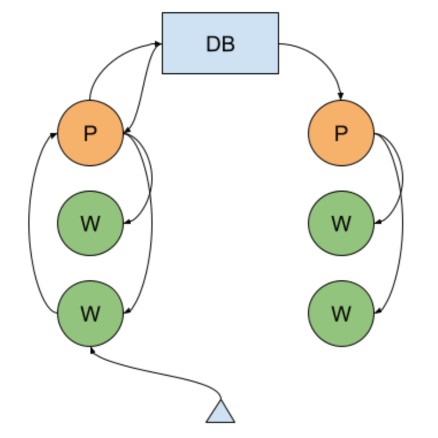

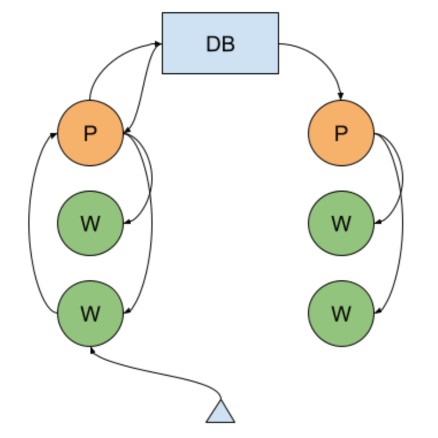

I am using a Node.js Cluster app with a MongoDB Replica Set in one of my projects. The server architecture uses MongoDB Change Streams, a mechanism for subscribing to changes in the database, to scale real-time functionality (video communication, chats, notifications) horizontally. Previously, instead of this mechanism, I exchanged data over UDP directly between the application server hosts, until our hosting provider, for an unknown reason, began to lose a significant portion of packets. Because of this, I had to abandon that method. For the last couple of months, I’ve been wondering how to optimize this mechanism in MongoDB, or even abandon it in favor of an additional component like Redis Pub/Sub. But without a particular need, I didn’t want to multiply entities: Occam’s Razor, you know. Besides, figuring out what’s already there isn’t a bad place to start.

Tailwind CSS v4 was recently released, and with it came a problem when using the Shadow DOM. You can find the issue here: tailwindlabs/tailwindcss#15005.

Tailwind v4 uses @property to define defaults for custom properties. Currently, shadow roots do not support @property. Although it was explicitly disallowed in the spec, there is ongoing discussion about adding support: w3c/css-houdini-drafts#1085.

It is unknown if the developers will fix this issue. In this post, we will consider workarounds to address it.
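One possible direction, shown here only as a sketch, is to read the `initial-value` of each `@property` rule in the generated CSS and re-declare it as a plain custom property on `:host`, which shadow roots do support. The function name `propertyRulesToHostDefaults` and the sample CSS are illustrative assumptions, and the regular expressions only cover simple, non-nested rules.

```javascript
// Hypothetical helper: turns @property initial values into :host defaults.
// Rules without an initial-value are skipped.
function propertyRulesToHostDefaults(css) {
  const ruleRe = /@property\s+(--[\w-]+)\s*\{([^}]*)\}/g;
  const declarations = [];
  for (const [, name, body] of css.matchAll(ruleRe)) {
    const initial = /initial-value\s*:\s*([^;]+)/.exec(body);
    if (initial) declarations.push(`  ${name}: ${initial[1].trim()};`);
  }
  return declarations.length ? `:host {\n${declarations.join("\n")}\n}` : "";
}

// Illustrative input resembling Tailwind v4 output.
const sampleCss = `
@property --tw-rotate-x { syntax: "*"; inherits: false; initial-value: rotateX(0); }
@property --tw-border-style { syntax: "*"; inherits: false; initial-value: solid; }
@property --tw-shadow-color { syntax: "*"; inherits: false; }
`;

console.log(propertyRulesToHostDefaults(sampleCss));
```

Note that plain custom properties inherit, so this does not reproduce `inherits: false`; nested elements that override a variable will pass the override down to their children.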

In this post, I show a lightweight JavaScript approach to parsing a connection string URI, such as a MongoDB connection string. The code breaks the URI down into its components: the scheme, credentials, hosts, endpoint, and options.

Input:

    mongodb://user:pass@host1:27017,host2:27017/db?option1=value1&option2=value2

Output:

    {
      "scheme": "mongodb",
      "username": "user",
      "password": "pass",
      "hosts": [ { "host": "host1", "port": 27017 }, { "host": "host2", "port": 27017 } ],
      "endpoint": "db",
      "options": { "option1": "value1", "option2": "value2" }
    }

I prefer simple, useful code of my own over external modules, so I wrote the URI parser in pure JavaScript. Here it is.
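As an illustration of the idea, a minimal sketch of such a parser might look like the following. The function name `parseConnectionString` and the regular expression are my assumptions and not necessarily the post's exact code; IPv6 hosts are not handled.

```javascript
// Hypothetical parser sketch for URIs of the form:
// scheme://[username[:password]@]host1[:port1][,hostN[:portN]][/endpoint][?options]
function parseConnectionString(uri) {
  const pattern =
    /^([^:/?#]+):\/\/(?:([^:@/?#]*)(?::([^@/?#]*))?@)?([^/?#]*)(?:\/([^?#]*))?(?:\?([^#]*))?$/;
  const match = pattern.exec(uri);
  if (!match) throw new Error(`Invalid connection string: ${uri}`);
  const [, scheme, username, password, hostList, endpoint, query] = match;

  // Split the comma-separated host list; the port is optional.
  const hosts = hostList
    .split(",")
    .filter(Boolean)
    .map((entry) => {
      const [host, port] = entry.split(":");
      return port === undefined ? { host } : { host, port: Number(port) };
    });

  // URLSearchParams takes care of splitting and decoding key=value pairs.
  const options = {};
  for (const [key, value] of new URLSearchParams(query ?? "")) {
    options[key] = value;
  }

  // Credentials may be percent-encoded; missing parts stay undefined.
  const decode = (value) =>
    value === undefined ? undefined : decodeURIComponent(value);

  return {
    scheme,
    username: decode(username),
    password: decode(password),
    hosts,
    endpoint,
    options,
  };
}

console.log(
  parseConnectionString(
    "mongodb://user:pass@host1:27017,host2:27017/db?option1=value1&option2=value2"
  )
);
```

A single regular expression keeps the sketch short; splitting the host list separately is what allows several `host:port` pairs, which the built-in `URL` class does not accept.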